A new paper titled “On predicting climate under climate change” has been published in Environmental Research Letters. The paper, which I co-authored with David Stainforth from the London School of Economics, suggests that current approaches to climate modelling are inadequate for providing probabilities of future climate change.

Ensemble climate prediction methods are becoming more commonplace, but practical computational constraints make it very expensive and time-consuming to run an operational climate model more than a handful of times. As computational capacity increases, however, running large ensembles becomes more feasible. To ensure that such future climate model experiments are informative for both science and society, we need to understand how best to design the experiments to explore the different sources of uncertainty, and how to provide meaningful quantitative output to inform probabilistic climate risk assessments.

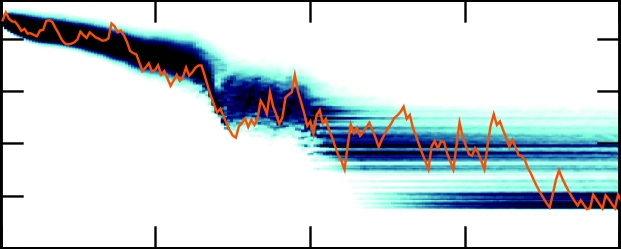

In our study, we explored the behaviour of an idealised low-dimensional nonlinear chaotic model, analogous to the coupled ocean-atmosphere system. Using such a model, we were able to run very large (multi-thousand) member ensembles to explore the impact of varying initial conditions and model parameters on the evolution of the system. Results from such experiments cannot be assumed to provide quantitative climate prediction information, but they can provide qualitatively relevant output that aids understanding of the limitations of current assumptions and modelling approaches.
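To give a feel for what a large initial condition ensemble of a low-dimensional chaotic model looks like in practice, here is a minimal sketch. It is only an illustration: it uses the classic Lorenz-63 system as a stand-in, which is an assumption on my part and not the model used in the paper, and the function names, ensemble size, step size and perturbation spread are all hypothetical choices.

```python
import numpy as np

def lorenz63(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Lorenz-63 tendencies for an array of states with shape (..., 3).

    Stand-in system for illustration only (not the model from the paper).
    """
    x, y, z = state[..., 0], state[..., 1], state[..., 2]
    return np.stack(
        [sigma * (y - x), x * (rho - z) - y, x * y - beta * z], axis=-1
    )

def rk4_step(state, dt, **params):
    """Advance every ensemble member by one fourth-order Runge-Kutta step."""
    k1 = lorenz63(state, **params)
    k2 = lorenz63(state + 0.5 * dt * k1, **params)
    k3 = lorenz63(state + 0.5 * dt * k2, **params)
    k4 = lorenz63(state + dt * k3, **params)
    return state + (dt / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)

def run_ensemble(n_members=2000, n_steps=2000, dt=0.01, spread=1e-3,
                 seed=0, **params):
    """Integrate an initial condition ensemble and return the final states.

    Members start from tiny random perturbations around one base state;
    passing e.g. rho=30.0 changes a model parameter for all members.
    """
    rng = np.random.default_rng(seed)
    base = np.array([1.0, 1.0, 20.0])
    state = base + spread * rng.standard_normal((n_members, 3))
    for _ in range(n_steps):
        state = rk4_step(state, dt, **params)
    return state

# Final states of all members, shape (n_members, 3).
final = run_ensemble()
print("ensemble mean of x:", final[:, 0].mean())
print("ensemble std of x: ", final[:, 0].std())
```

Because the whole ensemble is stepped forward as one array, thousands of members cost little more than a single run, which is the practical reason idealised models allow experiments that are out of reach for operational climate models.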

Our results show that a temporal definition of climate, which uses time series from single simulations or small ensembles, cannot reliably quantify the climate distributions of a climate model. This holds under both stationary and non-stationary forcing conditions, analogous to an unforced and a forced climate system respectively. Our findings imply that for seasonal to multi-decadal predictions we ought to run multi-hundred (or larger) initial condition ensemble experiments irrespective of whether the climate is changing; under climate change, the assumption that single simulations can quantify climate distributions becomes even less tenable. We also demonstrate that reliably distinguishing between internal variability and climate change in a specific climate model requires large initial condition ensemble experiments. This is the case whether our aim is to i) understand our models, ii) support scientific understanding of the climate system, or iii) provide information which is valuable in guiding policy decisions.
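The distinction between a temporal and an ensemble definition of climate can be sketched in a few lines of code. The example below is again only illustrative: it assumes the Lorenz-63 system as a stand-in (not the model used in the paper), mimics changing forcing with a hypothetical linear ramp in the parameter `rho`, and uses arbitrary choices for ensemble size, window length and ramp range. It collects one member's time series over a window (the temporal distribution) and all members at a single instant (the ensemble distribution) so the two can be compared.

```python
import numpy as np

def tendency(state, rho, sigma=10.0, beta=8.0 / 3.0):
    """Lorenz-63 tendencies for states of shape (n_members, 3).

    Stand-in system for illustration only (not the model from the paper).
    """
    x, y, z = state[:, 0], state[:, 1], state[:, 2]
    return np.stack(
        [sigma * (y - x), x * (rho - z) - y, x * y - beta * z], axis=1
    )

def step(state, rho, dt):
    """One fourth-order Runge-Kutta step with rho held fixed over the step."""
    k1 = tendency(state, rho)
    k2 = tendency(state + 0.5 * dt * k1, rho)
    k3 = tendency(state + 0.5 * dt * k2, rho)
    k4 = tendency(state + dt * k3, rho)
    return state + (dt / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)

def simulate(n_members, n_steps, dt=0.01, rho_start=28.0, rho_end=28.0,
             spinup_steps=2000, seed=0):
    """Return z for every member at every step, shape (n_steps, n_members).

    rho is ramped linearly from rho_start to rho_end (a hypothetical forcing
    scenario); equal values give stationary forcing.
    """
    rng = np.random.default_rng(seed)
    state = np.array([1.0, 1.0, 20.0]) + 1e-3 * rng.standard_normal((n_members, 3))
    # Spin up at the initial forcing so that the members decorrelate.
    for _ in range(spinup_steps):
        state = step(state, rho_start, dt)
    z = np.empty((n_steps, n_members))
    for i, rho in enumerate(np.linspace(rho_start, rho_end, n_steps)):
        state = step(state, rho, dt)
        z[i] = state[:, 2]
    return z

z = simulate(n_members=500, n_steps=3000, rho_end=35.0)

window = 500
temporal = z[-window:, 0]  # one member, sampled over the last `window` steps
ensemble = z[-1, :]        # every member, sampled at the final step only

quantiles = [0.05, 0.5, 0.95]
print("temporal quantiles of z:", np.quantile(temporal, quantiles))
print("ensemble quantiles of z:", np.quantile(ensemble, quantiles))
```

Rerunning with a different member in place of column `0`, or a different `window`, shows how much the temporal estimate depends on which single simulation happens to be available, whereas the ensemble estimate describes the model's distribution at one moment under the forcing at that moment.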

The results extend a large body of previous work which has utilised low-dimensional models to make inferences about the climate system and inform the design of modelling experiments. While previous studies have typically focussed on time-invariant systems, in trying to understand the climate system under altered forcing conditions, we examined the behaviour of a nonlinear system under time-varying conditions. The results also relate to previous work which has attempted to quantify the relative roles of different sources of climate prediction uncertainty. We illustrate the need to reconsider assumptions about the contribution of initial conditions to the total climate prediction uncertainty.

Moreover, the work is framed in the context of real-world adaptation and mitigation decision problems. We attempt to bridge the nonlinear dynamics, climate modelling and adaptation communities, making our findings relevant for those who are tasked with basing decisions on model estimates of future climate risk.

If you’d like any more information about the study, feel free to contact me – jdaron@csag.uct.ac.za.